Day Three

Today, I taught a computer to see.

I did use a bit of code written by someone else, but that's practically unavoidable these days. You'll see what I mean in a second.

My computer (meaning my AWS P2 instance, not my actual computer) can now tell the difference between a cat and a dog with an accuracy of about 97%. In other words, roughly 3 out of every 100 times it will think that a cat is a dog, or that a dog is a cat. That's better than a lot of humans!
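The arithmetic behind that 97% is just correct predictions divided by total predictions. Here's a minimal sketch; the `accuracy` helper and the `predicted`/`actual` labels are made up for illustration and are not output from my model:

```python
def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must be the same length")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Hypothetical example: 100 pictures, 3 of which get the wrong label.
actual = ["cat"] * 50 + ["dog"] * 50
predicted = list(actual)
predicted[0] = "dog"   # a cat mistaken for a dog
predicted[1] = "dog"   # another cat mistaken for a dog
predicted[50] = "cat"  # a dog mistaken for a cat

acc = accuracy(predicted, actual)
errors = round((1 - acc) * len(actual))
print(f"accuracy = {acc:.0%}, errors = {errors} out of {len(actual)}")
# prints "accuracy = 97%, errors = 3 out of 100"
```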

To do this, I used a pre-trained model called VGG-16. VGG-16 is a convolutional neural network developed by the Visual Geometry Group at the University of Oxford. Why 16? Was it their 16th attempt at creating a convolutional neural network for image recognition? Apparently not. According to the VGG website, there are two flavors of VGG available: one that uses 16 weight layers and one that uses 19. Don't ask me what that means.
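For the curious: a "weight layer" is a layer with learnable weights, meaning the convolutional and fully connected layers (the max-pooling layers have none). The sketch below writes out the VGG-16 and VGG-19 layer configurations as plain Python lists and counts them. The list format and the helper names are illustrative, not part of any VGG or Keras API, and the parameter count assumes 224×224 RGB input and 3×3 convolutions with biases, as in the standard VGG-16.

```python
# Layer configurations for VGG-16 and VGG-19 ("D" and "E" in the VGG paper).
# Each integer is a 3x3 convolutional layer with that many output channels;
# "M" is a 2x2 max-pooling layer, which has no weights.
VGG16_CONV = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
              512, 512, 512, "M", 512, 512, 512, "M"]
VGG19_CONV = [64, 64, "M", 128, 128, "M", 256, 256, 256, 256, "M",
              512, 512, 512, 512, "M", 512, 512, 512, 512, "M"]

# Both networks end with the same three fully connected layers
# (1,000 outputs = the 1,000 ImageNet classes).
FC_SIZES = [4096, 4096, 1000]


def count_weight_layers(conv_config, fc_sizes=FC_SIZES):
    """Convolutional layers plus fully connected layers; pooling doesn't count."""
    conv_layers = sum(1 for layer in conv_config if layer != "M")
    return conv_layers + len(fc_sizes)


def count_parameters(conv_config, fc_sizes=FC_SIZES, in_channels=3, image_size=224):
    """Total learnable weights and biases, assuming 3x3 convs and square RGB input."""
    total = 0
    channels, size = in_channels, image_size
    for layer in conv_config:
        if layer == "M":
            size //= 2  # each pooling layer halves the width and height
        else:
            total += 3 * 3 * channels * layer + layer  # kernel weights + biases
            channels = layer
    features = channels * size * size  # flattened output of the last pooling layer
    for units in fc_sizes:
        total += features * units + units  # weight matrix + biases
        features = units
    return total


print(count_weight_layers(VGG16_CONV))      # 16
print(count_weight_layers(VGG19_CONV))      # 19
print(f"{count_parameters(VGG16_CONV):,}")  # 138,357,544
```

So the "16" is 13 convolutional layers plus 3 fully connected ones, and VGG-19 adds three more convolutional layers. Most of those roughly 138 million parameters live in the first fully connected layer.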

The VGG models sit on top of Keras, a library that makes it easier to build and train neural networks. Keras sits on top of Theano, a library that takes math written in Python and compiles it into code that can run on the GPU via CUDA (NVIDIA's GPU programming platform) and cuDNN (NVIDIA's CUDA Deep Neural Network library). See what I mean? It's turtles all the way down.

Anyway, thanks to all that abstraction, I was able to accomplish my goal with just seven lines of code:

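```python
from vgg16 import Vgg16  # pre-built VGG-16 wrapper class, built on Keras

batch_size = 64  # number of images processed per training step

# Load VGG-16 with its pre-trained ImageNet weights
vgg = Vgg16()

# Iterators that stream images from disk in batches;
# each subdirectory (e.g. cats/, dogs/) is treated as one class
batch_training = vgg.get_batches("/data/train/", batch_size=batch_size)
batch_valid = vgg.get_batches("/data/valid/", batch_size=batch_size)

# Swap the 1,000-class ImageNet output layer for one sized to our classes
vgg.finetune(batch_training)

# Train for one pass (epoch) over the training data, scoring on the validation set
vgg.fit(batch_training, batch_valid, nb_epoch=1)
```

The training data contained a total of 23,000 cat and dog pictures, about the same as a mid-sized subreddit. My P2-enabled computer took ten minutes to train the model on the data. I'm curious to see how my actual computer would do.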

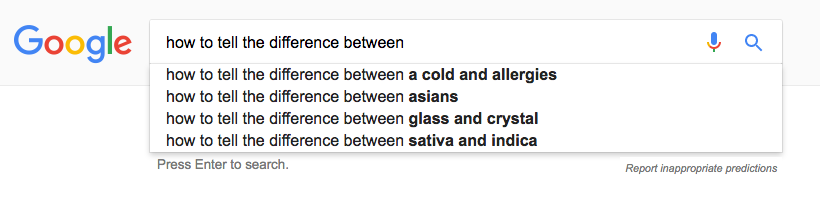
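With `batch_size = 64`, one epoch (one pass over all 23,000 training pictures) works out to 360 batches. A quick check of that arithmetic, plus a ballpark throughput figure from the ten-minute training time; treat that last number as rough, since the ten minutes likely also covers the validation pass:

```python
import math

num_train_images = 23_000   # training pictures (from the post)
batch_size = 64             # same value as in the code above
training_seconds = 10 * 60  # "ten minutes", rounded

# The last batch is smaller when the images don't divide evenly.
batches_per_epoch = math.ceil(num_train_images / batch_size)
last_batch_size = num_train_images - (batches_per_epoch - 1) * batch_size
images_per_second = num_train_images / training_seconds

print(batches_per_epoch)         # 360
print(last_batch_size)           # 24
print(round(images_per_second))  # 38
```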

Tomorrow, I'll dive a little deeper into how VGG works, and see if it can tell the difference between all kinds of other things. Can a neural net tell the difference between dogs and horses? What about Zooey Deschanel and Katy Perry? 808s Kanye and Drake?

WHY DOES THIS HURT SO MUCH.